As privacy and data protection have become increasingly important aspects of smart home and IoT devices, our tests have steadily evolved in this direction in recent years. When we started our IoT certification, this topic was already part of the checks but was not yet a critical criterion; today, no product passes our tests without a solid concept for protecting users' personal data. Specifically, two critical points must be covered. First, the product handles personal data securely and protects it from access by attackers. Second, the product's privacy statement comprehensively informs users about the collection, processing, and possible sharing of their data, so that they can decide for themselves whether its use is acceptable to them. However, we explicitly do not rate the amount of data collected or the resulting impact on the user's privacy.

Of course, this question is still highly relevant to many users, and not everyone (or, to be honest, hardly anyone) reads the privacy policy carefully before using a new app in order to make an informed decision about whether to use it. This is exactly why we designed our new Android App Privacy test format.

The idea is a test that can be applied to any Android application and that examines and evaluates its data protection and the direct impact of using it on the user's privacy. Unlike in our other tests, the focus is explicitly not on the security of the application, but on the user's privacy with respect to the entity operating the app. The test is therefore not limited to Android applications belonging to smart home or IoT solutions; in principle, it applies to any application. The result is a measurable score that allows different applications to be compared with each other and, if necessary, ranked. To maximize comparability and allow the test to scale, we opted for a fully automated approach: it is fast and, because it eliminates any human interaction, as objective as possible. The test is divided into three major areas, whose results are combined in the final evaluation: static analysis, behavior analysis, and privacy policy analysis.

Static analysis

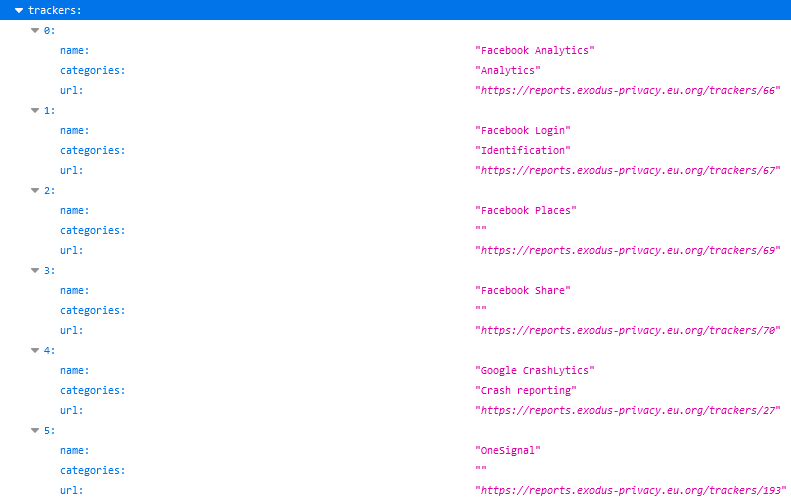

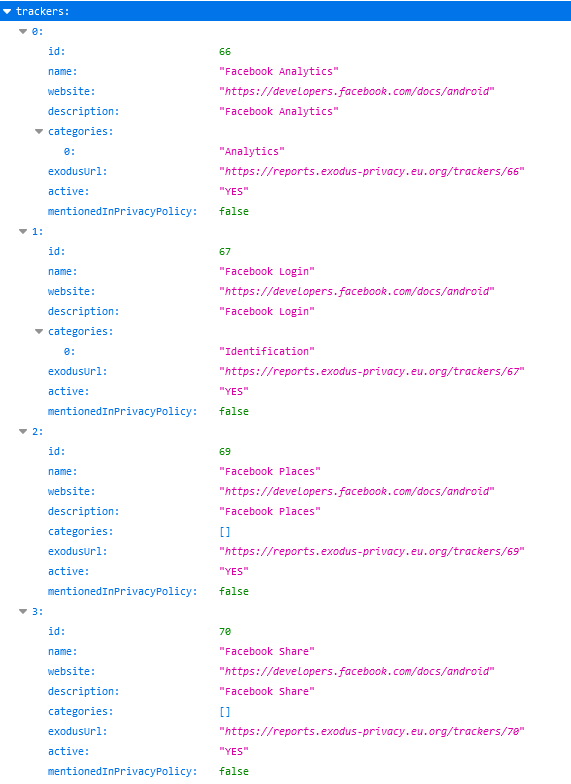

As in any of our tests, the first step is to collect all relevant information that can be obtained without actually running the application. This mainly includes the requested permissions, embedded tracking libraries and other conspicuous third-party modules, as well as relevant parts of the application's source code, such as static URLs or references to communication with certain domains. The goal is an initial assessment of what the application is theoretically capable of, which can then be substantiated, confirmed, or combined with the results of the practical behavior analysis.
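To make the static step more concrete, here is a minimal Python sketch of the kind of checks involved. It assumes the APK has already been decoded (for example with apktool) so that the manifest is available as plain XML and the class names and string constants have been extracted. The helper `analyze_static` and the three-entry tracker list are hypothetical illustrations; a real test would rely on a maintained tracker signature database.

```python
import re
import xml.etree.ElementTree as ET
from dataclasses import dataclass, field

# Namespace of android:* attributes in a decoded AndroidManifest.xml.
ANDROID_NS = "{http://schemas.android.com/apk/res/android}"

# Illustrative sample only: package prefix -> tracker label.
TRACKER_PREFIXES = {
    "com.google.firebase.analytics": "Firebase Analytics",
    "com.facebook.appevents": "Facebook App Events",
    "com.adjust.sdk": "Adjust",
}

# Hard-coded http(s) URLs inside string constants.
URL_PATTERN = re.compile(r"https?://[^\s\"'<>]+")


@dataclass
class StaticFindings:
    permissions: list[str] = field(default_factory=list)
    trackers: set[str] = field(default_factory=set)
    urls: set[str] = field(default_factory=set)


def analyze_static(manifest_xml: str, class_names: list[str],
                   strings: list[str]) -> StaticFindings:
    """Hypothetical helper: collect permissions, tracker modules and URLs."""
    findings = StaticFindings()
    root = ET.fromstring(manifest_xml)
    # Requested permissions: <uses-permission android:name="..."/>.
    for elem in root.iter("uses-permission"):
        name = elem.get(ANDROID_NS + "name")
        if name:
            findings.permissions.append(name)
    # Embedded tracking modules, recognized by their package prefix.
    for cls in class_names:
        for prefix, label in TRACKER_PREFIXES.items():
            if cls.startswith(prefix + "."):
                findings.trackers.add(label)
    # Static URLs that hint at communication with certain domains.
    for s in strings:
        findings.urls.update(URL_PATTERN.findall(s))
    return findings


manifest = """<manifest xmlns:android="http://schemas.android.com/apk/res/android"
    package="com.example.app">
  <uses-permission android:name="android.permission.INTERNET"/>
  <uses-permission android:name="android.permission.ACCESS_FINE_LOCATION"/>
</manifest>"""
f = analyze_static(manifest,
                   ["com.adjust.sdk.Adjust", "com.example.app.MainActivity"],
                   ['endpoint = "https://api.example.com/v1/telemetry"'])
print(f.permissions)  # ['android.permission.INTERNET', 'android.permission.ACCESS_FINE_LOCATION']
print(f.trackers)     # {'Adjust'}
print(f.urls)         # {'https://api.example.com/v1/telemetry'}
```

Matching on package prefixes is cheap, but it only shows that a module is present, not that it is used; this is why the static findings still have to be confirmed by the behavior analysis.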

Behavior analysis

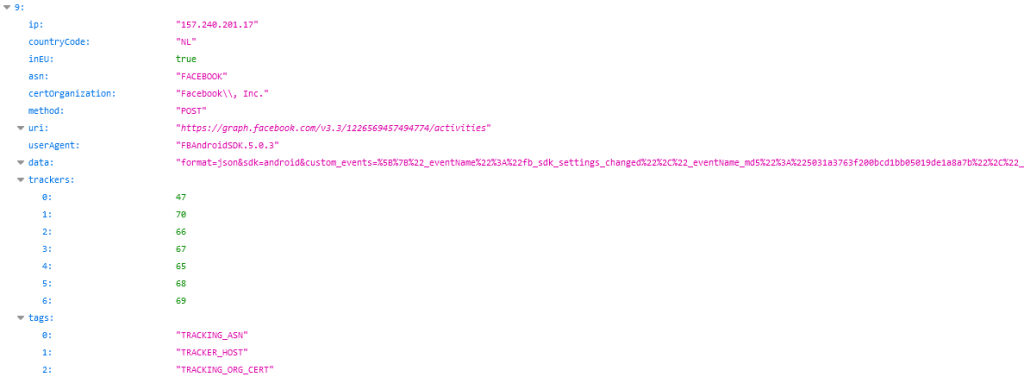

The most extensive and time-consuming area is the behavior analysis. Its aim is to examine the application's communication behavior as comprehensively as possible and under realistic conditions. Simply observing the app's communication while it is idle is not sufficient, since this will almost certainly not trigger all of the app's states and, with them, all of its communication processes. To cover them with reasonably high probability, every app function must be activated; put simply, every button must be pressed at least once. For this purpose, we developed an additional application that automatically and recursively walks through the menu structure of the analyzed application, pressing each interactive UI element (buttons, sliders, links, etc.) once. In parallel, all communication by the application is recorded. The result is a fairly comprehensive overview of the app's actual communication; if necessary, we can even map individual UI actions directly to the communication they trigger.
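The recursive exploration can be pictured as a depth-first traversal of the app's screens. The following Python sketch is not our actual tool: it models the app abstractly through two hypothetical callbacks, `elements_of` (lists the interactive elements on a screen) and `press` (activates an element and returns the resulting screen), which in practice would be backed by a UI automation framework. It presses each element of every reachable screen once and returns an ordered action log.

```python
from collections.abc import Callable, Hashable

Screen = Hashable  # any hashable screen identifier
Element = str      # identifier of an interactive UI element


def explore(start: Screen,
            elements_of: Callable[[Screen], list[Element]],
            press: Callable[[Screen, Element], Screen]) -> list[tuple[Screen, Element]]:
    """Depth-first walk that presses every interactive element of every
    reachable screen once and returns the ordered action log."""
    visited: set[Screen] = set()
    log: list[tuple[Screen, Element]] = []

    def visit(screen: Screen) -> None:
        if screen in visited:
            return
        visited.add(screen)
        for element in elements_of(screen):
            log.append((screen, element))
            # Follow the press into the resulting screen. A real driver would
            # then navigate back to `screen` before pressing the next element.
            visit(press(screen, element))

    visit(start)
    return log


# Toy model of an app: screen -> {element: screen reached by pressing it}.
APP = {
    "home": {"settings_button": "settings", "login_link": "login"},
    "settings": {"tracking_toggle": "settings", "back": "home"},
    "login": {"submit_button": "home"},
}
for action in explore("home", lambda s: list(APP[s]), lambda s, e: APP[s][e]):
    print(action)
```

A real driver additionally has to handle back navigation, dialogs, and text input, and should store a timestamp per action so that recorded traffic can later be matched to it.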

The recorded communication is then searched for relevant information. This makes it possible to determine which domains the app communicated with, which data was transferred and in what volume, and whether this data can be linked to findings from the static analysis. For example, it is particularly interesting to see whether tracking modules identified statically are also active in practice.
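A minimal Python sketch of this step, under simplifying assumptions: each recorded request is reduced to a timestamp, URL, and number of bytes sent (the `Request` type is hypothetical), the tracker domains are taken from the static analysis, and a request is attributed to the most recent UI action if it occurs within a fixed time window. That time-window heuristic is only an illustrative choice; background traffic can easily be misattributed.

```python
import bisect
from collections import defaultdict
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass
class Request:
    timestamp: float  # seconds since the start of the recording
    url: str
    bytes_sent: int


def bytes_per_host(requests: list[Request]) -> dict[str, int]:
    """Total outgoing data volume per contacted host."""
    volume: dict[str, int] = defaultdict(int)
    for req in requests:
        volume[urlsplit(req.url).hostname or ""] += req.bytes_sent
    return dict(volume)


def active_trackers(hosts, tracker_domains: set[str]) -> set[str]:
    """Statically detected tracker domains that were actually contacted."""
    return {d for d in tracker_domains
            if any(h == d or h.endswith("." + d) for h in hosts)}


def attribute_to_actions(requests: list[Request],
                         actions: list[tuple[float, str]],
                         window: float = 2.0) -> dict[str, list[str]]:
    """Assign each request to the latest UI action at most `window` seconds
    before it. `actions` must be sorted by timestamp."""
    times = [t for t, _ in actions]
    result: dict[str, list[str]] = {}
    for req in requests:
        i = bisect.bisect_right(times, req.timestamp) - 1
        if i >= 0 and req.timestamp - times[i] <= window:
            result.setdefault(actions[i][1], []).append(req.url)
    return result


requests = [
    Request(0.5, "https://api.example.com/login", 300),
    Request(1.0, "https://sdk.tracker.example.net/collect", 1200),
    Request(10.0, "https://api.example.com/data", 500),
]
volume = bytes_per_host(requests)
print(volume)  # {'api.example.com': 800, 'sdk.tracker.example.net': 1200}
print(active_trackers(volume, {"tracker.example.net", "ads.example.org"}))  # {'tracker.example.net'}
print(attribute_to_actions(requests, [(0.2, "login_button"), (9.5, "refresh_button")]))
```

All domains here are placeholders; a tracker that appears in the static findings but never in `active_trackers` is present in the code but was not observed communicating.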

Privacy policy

As mentioned earlier, the Android App Privacy test format focuses on the actual, practical data collection and processing capabilities of an application. The privacy policy and the information it contains are therefore of secondary relevance for this test format: we want to analyze what the application actually does, not what the privacy policy claims it does.

Nevertheless, we cannot disregard it completely, since it can (and should) contain information, especially on data processing and sharing with third parties, that cannot be fully derived from the application's communication behavior alone. Moreover, despite the focus of the analysis, an application with a good privacy policy should still rank ahead of an application with similar communication behavior but a poor privacy policy.

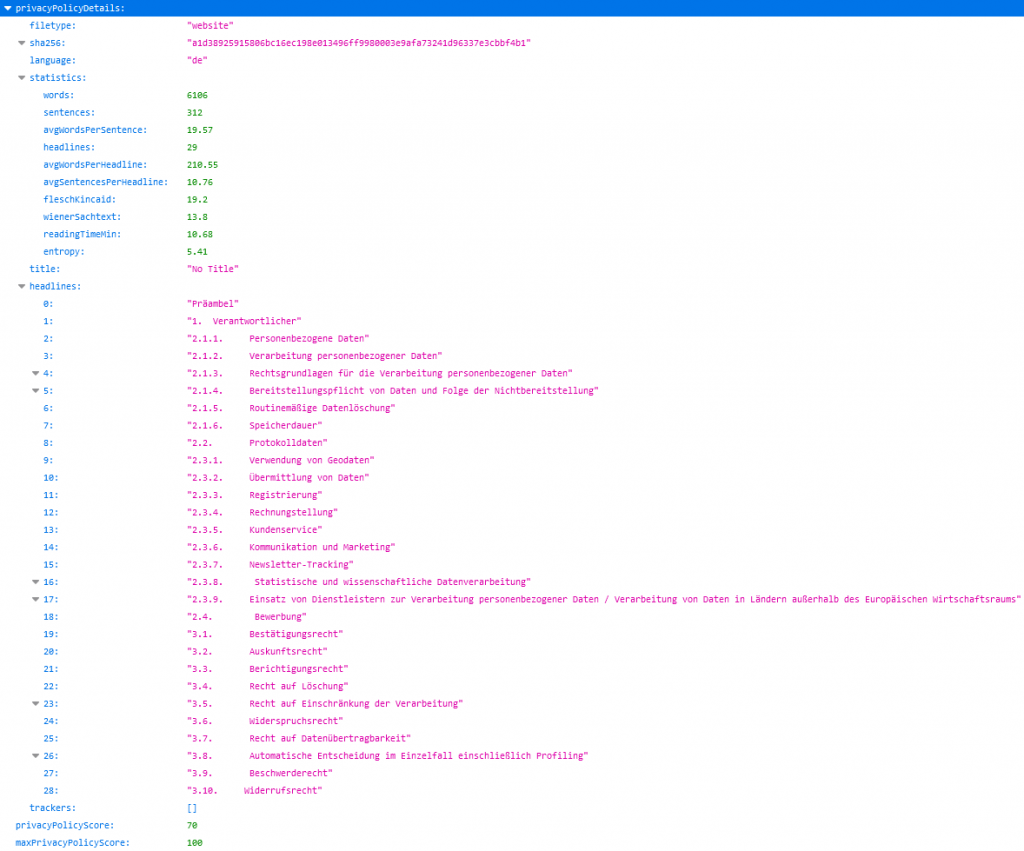

To evaluate the privacy policy, we also developed a fully automated text mining approach that extracts essential information from the text and compares it with the findings of the behavior analysis. An automated approach naturally has weaknesses compared to a detailed manual evaluation, but it still provides a good indicator. Additional statistical metrics, for example for readability, comprehensibility, and structure, also give a good indication of how much effort went into writing the privacy policy.
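The following Python sketch illustrates both aspects in strongly simplified form. The keyword map `CATEGORY_KEYWORDS` and the helper names are hypothetical, and plain keyword matching stands in for a real text mining pipeline. Flesch Reading Ease, 206.835 - 1.015 × (words / sentences) - 84.6 × (syllables / words), is used here only as one common example of a readability metric, with a crude vowel-group heuristic for counting syllables.

```python
import re

# Hypothetical keyword map: data category -> phrases indicating disclosure.
CATEGORY_KEYWORDS = {
    "location": ("location", "gps"),
    "contacts": ("contacts", "address book"),
    "advertising_id": ("advertising id", "advertising identifier"),
}


def disclosed_categories(policy: str) -> set[str]:
    """Data categories the policy mentions at all (naive keyword matching)."""
    text = policy.lower()
    return {cat for cat, phrases in CATEGORY_KEYWORDS.items()
            if any(p in text for p in phrases)}


def undisclosed(observed: set[str], policy: str) -> set[str]:
    """Categories seen in the traffic but never mentioned in the policy."""
    return observed - disclosed_categories(policy)


def count_syllables(word: str) -> int:
    # Crude heuristic: one syllable per group of consecutive vowels.
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def flesch_reading_ease(text: str) -> float:
    """Higher values mean easier text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return (206.835
            - 1.015 * (len(words) / len(sentences))
            - 84.6 * (syllables / len(words)))


policy = ("We collect your location to show nearby devices. "
          "We share your advertising ID with selected partners.")
print(sorted(disclosed_categories(policy)))           # ['advertising_id', 'location']
print(undisclosed({"location", "contacts"}, policy))  # {'contacts'}
print(round(flesch_reading_ease(policy), 1))
```

Passing the data categories actually observed in the behavior analysis to `undisclosed` flags data that is transferred but never mentioned in the policy.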

Evaluation

To evaluate the individual analysis steps, we defined a maximum achievable score for each step, which also weights the steps against each other; points are then deducted for each negative finding. The static analysis and the behavior analysis carry almost equal weight; only the privacy policy analysis is weighted slightly lower, for the reasons given above. The overall score is simply the sum of the scores of all three analysis steps, expressed as a percentage of the maximum achievable total. We are, of course, aware that reducing the test result to a single number loses detail when comparing two applications. Nevertheless, as with our other test formats, we want to be able to present the result at a glance and display it on a seal, so that even less experienced users can make a direct assessment. We will also publish posts on interesting tests in the new format that break the result down into its components and provide deeper insight. In addition, beyond this short introduction, we are planning further posts that go into more detail on the topic “How and why is testing done in the Android App Privacy test format?”
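The scoring scheme described above can be expressed in a few lines of Python. The maximum points per step (40, 40, and 30) and the deduction values are purely hypothetical, chosen only to reflect that the privacy policy analysis is weighted slightly lower; the rule that a step cannot fall below zero points is likewise an assumption.

```python
from dataclasses import dataclass


@dataclass
class StepResult:
    name: str
    max_points: float        # hypothetical maximum; doubles as the step's weight
    deductions: list[float]  # points subtracted for each negative finding

    @property
    def score(self) -> float:
        # Assumption: a step cannot drop below zero points.
        return max(0.0, self.max_points - sum(self.deductions))


def overall_percentage(steps: list[StepResult]) -> float:
    """Sum of all step scores as a percentage of the maximum total."""
    achieved = sum(s.score for s in steps)
    maximum = sum(s.max_points for s in steps)
    return 100.0 * achieved / maximum


steps = [
    StepResult("static analysis", 40, [5, 3]),
    StepResult("behavior analysis", 40, [10]),
    StepResult("privacy policy analysis", 30, [6]),
]
print(f"{overall_percentage(steps):.1f} %")  # 86 of 110 points -> 78.2 %
```

Because the maximum points double as weights, changing the relative importance of a step only requires changing its `max_points`.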